Guest article by Werner H. Kunz and Khanh Bao Quang Le. This article is a follow-up to our earlier blog article: The Future of Work: Embracing the Collaboration of Human and Digital Employees in Service.

Last year, we wrote (based on our JSR article) about human-digital collaborations in service jobs and explored the potential and the right setup for collaborating with AI tools to lift burdens from frontline staff. Our studies showed that customers appreciate well-orchestrated teamwork between humans and digital employees (Le et al., 2025).

It is a compelling vision in which AI takes on the heavy lifting while we focus on high-level strategy. Boston Consulting Group found that consultants using generative AI completed 12.2% more tasks, finished them 25% faster, and produced work of 40% higher quality. McKinsey estimates that AI could add around $4.4 trillion to global productivity. For service organizations looking to do more with less, these figures feel like a turning point. And in many ways, they are. Tools like ChatGPT, Copilot, and Claude are no longer novelties on the fringes of the workflow. They are active participants in how customer service agents write emails, how marketing teams create content, and how analysts summarize reports.

AI complacency: where the AI productivity dream bursts

Yet generative AI can confabulate: it produces plausible-sounding but false information. Think of the lawyers who were fined after submitting court citations fabricated by ChatGPT, or the university that failed an entire class because an AI tool wrongly flagged submissions as AI-generated. When outputs look polished, employees may stop double-checking them. That is where productivity gains start to crumble.

“The biggest risk of AI isn’t that it gets things wrong, but that nobody notices.”

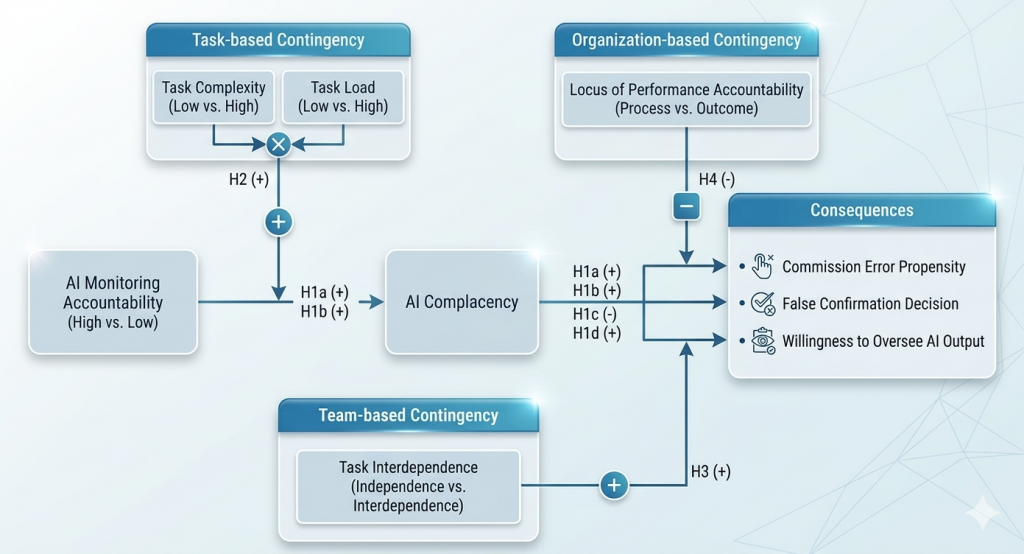

In our new paper “When Humans Stop Thinking,” we call this phenomenon AI complacency (Le and Kunz, 2026). It describes employees’ tendency to intentionally skip validating AI-generated content, even when errors are present. It is not about lacking technical skills; rather, the machine’s fluent presentation creates a false sense of security. Over time, employees may slip into a “human-out-of-the-loop” routine. We conducted six experiments with over 1,300 participants, including 160 service workers, to identify what drives AI complacency and what makes it worse or better. Our main finding is simple but carries significant weight:

The primary driver of AI complacency is not overconfidence in AI, and it’s not a lack of technical skill. It’s the absence of accountability for monitoring.

When people know that no one will check their work, they become less willing to review AI output and more likely to accept it blindly, which lets mistakes slip through. Simply put, if no one is watching, why bother double-checking? We also examined factors that amplify or lessen complacency. A few patterns from the studies are worth noting:

- The Stress Cocktail: When tasks were cognitively demanding and employees were juggling multiple competing demands, the impact of low accountability on complacency grew significantly stronger. Under pressure, people are especially likely to default to whatever the AI suggests.

- The Team Trap: When tasks were interdependent, the willingness to oversee AI content declined further. The likely mechanism is similar to diffusion of responsibility: someone else will catch it, or so people tend to assume.

- The Wrong Type of Pressure: Holding employees accountable for outcomes, rather than merely for following procedures, tends to reduce errors. Process-focused accountability, which emphasizes following steps correctly, can create a false sense of thoroughness. Employees check off the boxes, assume the AI has taken care of the rest, and errors still slip through.

Managerial implications: designing for vigilance

If the AI era truly delivers the productivity gains its advocates claim, then complacency poses a real risk to those gains. Errors that reach customers don’t just require rework; in sectors like healthcare, legal, or financial services, they can cause serious harm. In broader service contexts, they can also damage trust and weaken the value that hybrid human-AI teams are meant to provide. It might seem easiest to respond by banning AI or requiring manual checks, but a more nuanced approach is necessary. Our research provides several practical options:

- Build accountability into the process, not just the policy: Employees need to understand that someone, somewhere, will ask them to explain what they reviewed and why they approved it. Incorporating human-in-the-loop protocols and transparent monitoring can help prevent complacency. Frameworks like Deloitte’s Trustworthy AI model highlight user accountability and offer templates for ethical use.

- Set outcome expectations: When people know they will be held accountable for the outcome, not just the process, they stay more alert. Accenture’s enterprise-wide AI compliance program exemplifies this; it establishes clear policies and performance expectations so that employees justify their decisions.

- Design specifically for high-complexity, high-load environments: These are the conditions where complacency is most likely to develop. Rotating oversight responsibilities, adding review checkpoints, or simply reducing simultaneous task demands during AI-assisted work can help close the gap. Removing unnecessary steps and standardizing routine tasks frees up cognitive resources. IBM’s business automation workflows are one example of using automation to reduce workload, letting employees focus their attention where it really counts.

- Take team design seriously: When tasks are highly interdependent, nobody feels fully responsible for the AI’s output. Assigning clear, individual ownership for AI monitoring, even in collaborative workflows, can significantly reduce the risk of errors being overlooked.

These measures are not meant to slow down innovation. Rather, they help ensure that AI fulfills its promise without eroding human judgment.

A More Honest Conversation About Human-AI Collaboration

In recent years, our community has mainly debated how to incorporate AI into the workforce and whether employees would accept AI as a colleague. Now the question seems to be a different one: what kind of colleague does AI turn its human partners into? The answer, if we are not careful, may be a less attentive one. Faster, yes. More efficient in narrow terms, probably. But also somewhat less likely to notice the thing that does not add up, the edge case the model was never trained on, the customer whose situation falls outside the pattern the system learned.

That is not an argument against AI in service work. It is an argument for taking the human side of the equation as seriously as we take the algorithmic side.

For more details on this research, check out the full paper by Le and Kunz (2026).

Werner Kunz

Professor of Marketing – University of Massachusetts Boston

Director of the digital media lab

Khanh Le

Lecturer in Marketing

Auckland University of Technology, Auckland, New Zealand

References

- Le, K. B. Q., Sajtos, L., Kunz, W. H., & Fernandez, K. V. (2025). The Future of Work: Understanding the Effectiveness of Collaboration Between Human and Digital Employees in Service. Journal of Service Research, 28(1), 186–205. https://doi.org/10.1177/10946705241229419

- Le, K. B. Q., & Kunz, W. H. (2026). When Humans Stop Thinking: Tackling the Silent Threat of AI Complacency in Service Operations. Journal of Service Management, 36 (forthcoming).