Guest article by Felix Eggers & Jochen Wirtz.

In the digital age, technologies bring manifold benefits to consumers, including improved access to information, enhanced service efficiency, convenience, and the emergence of novel digital experiences such as artificial intelligence (AI), virtual and augmented reality, and service robots. These innovations, particularly in information-based services, often come at minimal incremental costs and are increasingly offered as complimentary additions or are advertising-funded, leading to large gains in consumer surplus. Consumer interactions are increasingly linked to data processing, analytics, and machine learning. While this allows personalization and customization for consumers, it also means that information is personally identifiable, which makes consumers more vulnerable.

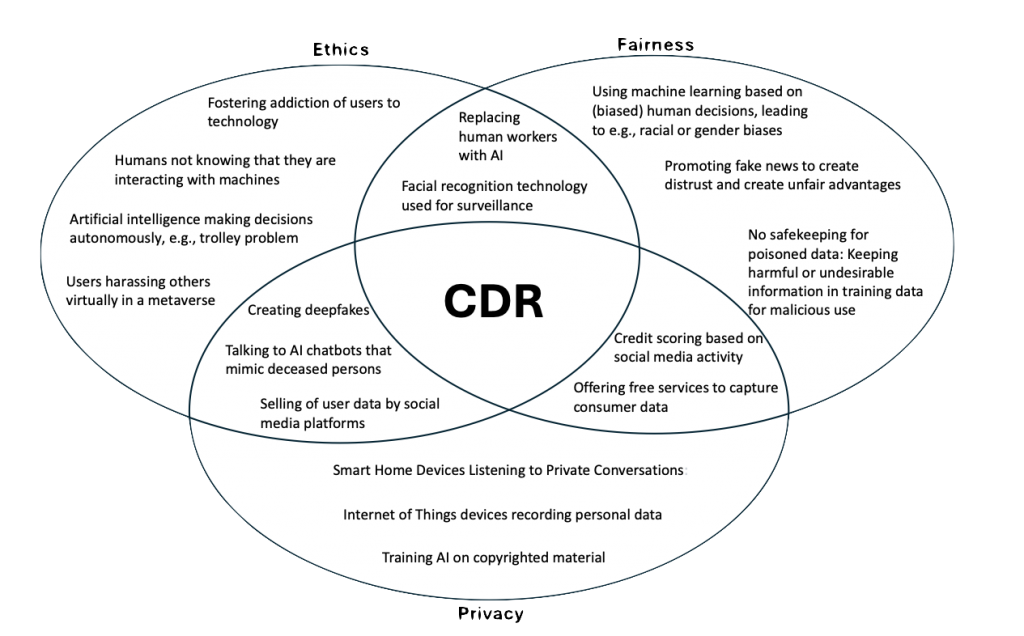

In this regard, digitalization creates serious ethical, privacy, and fairness risks for consumers (Wirtz et al. 2023). To enable corporate digital responsibility (CDR), firms need to embrace these dimensions. Although akin to corporate social responsibility (CSR), CDR requires a dedicated perspective. For example, while consumers generally agree that environmentally friendly CSR activities are favorable, in CDR some consumers benefit from algorithmic biases, while others, often minorities, are disadvantaged. Consequently, CDR requires a nuanced trade-off between personal benefits and care for others, and it needs to raise awareness of the negative externalities of technological developments, including from a consumer perspective.

We explain each of the three dimensions, i.e., ethics, fairness, and privacy, in the following. The boundaries between these dimensions are not rigid, allowing them to overlap. The following figure shows an overview with more examples.

Ethics

Ethics and morality are the underlying principles that govern how people, and also companies, should act in any situation. With machines such as generative AI or self-driving cars making more and more decisions autonomously and without human oversight, the question becomes how technology can act ethically. Teaching machines to adhere to ethical standards, which are often based on tacit knowledge, presents a significant challenge, known as Polanyi’s paradox.

Google Duplex, an AI system capable of conducting natural phone conversations without being recognizable as a machine, exemplifies the ethical dilemmas in digital innovation. While showcasing technological advancement and potential benefits and time savings for consumers (e.g., by scheduling appointments via phone), it also raises questions about transparency (e.g., should AI identify itself as non-human?), consent (how do individuals consent to interact with AI?), and data processing (may the conversation data be stored and used for further AI training?). Moreover, although undoubtedly introduced with good intentions, such technology can also mimic celebrities and politicians as deepfakes, allowing the creation of misleading content, the manipulation of public opinion, the impersonation of individuals, and the spread of disinformation, while also making it harder to fight robocalls.

The use of this technology exemplifies the ethical challenges, highlighting the need for ethical guidelines and corporate responsibility to ensure technology serves the public good and does not harm individuals or society. Companies must navigate these ethical waters carefully, implementing safeguards against misuse and fostering an environment of trust and authenticity in digital content.

Fairness

Fairness within CDR is about guaranteeing equitable treatment and non-discrimination across digital offerings, services, and employment. This encompasses ensuring accessibility for all users, developing unbiased algorithms, and fostering equal opportunities within the digital economy. Algorithmic biases can occur at different stages, from data collection to algorithmic processing, and it is widely held that no bias-free machine learning algorithms exist (van Giffen, Herhausen, and Fahse 2022). However, not all algorithmic biases are unfair; it is therefore not the goal to mitigate all of them. Instead, companies should mitigate biases that discriminate based on protected classes, e.g., age, sex, or race, and lead to “algorithmic discrimination” that is not justified by non-protected characteristics (e.g., education and work experience).

For example, in machine learning, training data may include human biases that the machine perpetuates, or the training data may be unrepresentative of parts of the population, such as minorities, so that algorithmic decisions are inaccurate. The training data may also be incomplete, such that information about protected classes is missing and discrimination according to these variables cannot be detected. In that case, discrimination may occur due to correlated “proxy” information, for example, ZIP codes. There are numerous examples of such biases. Amazon offered same-day deliveries in US areas with many Prime users, but these ZIP code areas were predominantly white. Rite Aid used facial recognition software to identify shoplifters, and the software often falsely accused consumers, particularly women and Black, Latino, or Asian people.
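To make the idea of a “proxy” more concrete, the following is a minimal sketch of one possible check, not a method prescribed by the cited literature. It asks how much better a protected group can be guessed once a candidate feature such as a ZIP code is known. The function name `proxy_strength` and the records are hypothetical and made up purely for illustration.

```python
from collections import Counter, defaultdict


def proxy_strength(records):
    """Return (baseline, with_proxy) guessing accuracies.

    records: list of (proxy_value, protected_group) pairs.
    baseline:   accuracy of always guessing the most common group.
    with_proxy: accuracy of guessing the most common group within
                each proxy value (e.g., within each ZIP code).
    """
    overall = Counter(group for _, group in records)
    by_proxy = defaultdict(Counter)
    for proxy_value, group in records:
        by_proxy[proxy_value][group] += 1

    n = len(records)
    baseline = max(overall.values()) / n
    with_proxy = sum(max(c.values()) for c in by_proxy.values()) / n
    return baseline, with_proxy


# Hypothetical, made-up records: (zip_code, protected_group)
records = [
    ("10001", "A"), ("10001", "A"), ("10001", "A"), ("10001", "B"),
    ("20002", "B"), ("20002", "B"), ("20002", "B"), ("20002", "A"),
]

baseline, with_proxy = proxy_strength(records)
print(f"baseline={baseline:.2f}, with_proxy={with_proxy:.2f}")
```

A large gap between the two numbers suggests that the feature carries information about the protected class, so an algorithm using it could discriminate indirectly even when the protected class itself is absent from the training data.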

Fairness in the digital domain necessitates diligent efforts to recognize and rectify biases, e.g., via continuous monitoring, transparency, and accountability. As algorithmic fairness is also often related to data, firms need to ensure diversity in data sets and in the teams developing and managing digital technologies. Avoiding discrimination often necessitates collecting more consumer data, especially from protected groups, to identify and prevent discrimination. However, soliciting this data is complex due to privacy concerns and regulations like the European GDPR (Beke, Eggers, and Verhoef 2018).
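As an illustration of what continuous monitoring could look like in practice, the sketch below compares false positive rates across groups, i.e., how often people who did nothing wrong are flagged anyway. It is only one of many possible fairness checks, and it assumes that group membership and the true outcome are available for auditing. The function name `false_positive_rates` and the data are hypothetical.

```python
from collections import defaultdict


def false_positive_rates(cases):
    """Compute the false positive rate per group.

    cases: iterable of (group, flagged, actually_positive) tuples.
    Only cases that are actually negative enter the calculation.
    """
    negatives = defaultdict(int)
    flagged_negatives = defaultdict(int)
    for group, flagged, actually_positive in cases:
        if not actually_positive:
            negatives[group] += 1
            if flagged:
                flagged_negatives[group] += 1
    return {g: flagged_negatives[g] / negatives[g] for g in negatives}


# Hypothetical, made-up audit sample: (group, flagged, actually_positive)
cases = [
    ("X", True, False), ("X", False, False), ("X", False, False),
    ("X", False, False), ("X", True, True),
    ("Y", True, False), ("Y", True, False), ("Y", False, False),
    ("Y", False, False), ("Y", True, True),
]

rates = false_positive_rates(cases)
gap = max(rates.values()) - min(rates.values())
print(rates, f"gap={gap:.2f}")
```

In this made-up sample, group Y is wrongly flagged twice as often as group X. Which gap is acceptable, and which fairness metric is appropriate, remains a judgment that depends on the application.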

Privacy

Data form the basis for algorithmic decision-making, which makes data and privacy a core component of CDR. A commitment to privacy is manifested through transparent data collection policies, secure data storage, and empowering users with control over the use of their personal information.

However, the path to safeguarding consumer privacy is fraught with challenges. Privacy violations emerge in various forms, ranging from intrusive marketing communications to sophisticated data breaches and identity theft, each representing a significant breach of trust between consumers and corporations. Moreover, the undisclosed commercialization of consumer data highlights a glaring violation of privacy rights. While these privacy issues are often already regulated, e.g., via the General Data Protection Regulation (GDPR) in the European Union, this is not the case in the international arena where differing regulatory frameworks present additional hurdles for global corporations striving to maintain consistent privacy standards across borders.

An emerging and contentious issue within this domain is the training of AI systems using copyrighted materials, including user-generated content, without proper authorization. This practice not only entails legal ramifications but also raises profound ethical questions. It exemplifies the complex interrelation between privacy, ethics, and the use of data, as the utilization of personal information embedded in copyrighted materials without consent directly infringes upon intellectual property rights and privacy. Such practices underscore the pressing need for a balanced approach that respects both the innovative potential of AI and the imperatives of consumer privacy and data rights, challenging corporations to navigate the delicate balance between technological advancement and ethical responsibility.

Conclusion

These examples bring to the forefront the challenge of embedding ethical principles in technology, ensuring AI decisions reflect societal values and moral standards. Companies are tasked with not only adhering to ethical standards but also actively shaping these norms with their technology to ensure their digital practices promote the well-being of all stakeholders.

Engaging in CDR can be perceived as costly for firms as they need to make a trade-off between commercial interests and individual rights. It requires dedicating time and resources to monitoring, which can reduce algorithmic efficiency, or refraining from collecting personal information, which, in turn, reduces marketing and service effectiveness. However, CDR should not only be seen as a cost. It could be an investment to avoid negative publicity and, if done right, CDR can provide a competitive advantage by positioning the firm at the forefront of a development that at least some consumers already actively demand. These potential benefits would be a solid justification for engaging in CDR.

Felix Eggers

Professor of Marketing

Department of Marketing,

Copenhagen Business School

Jochen Wirtz

Vice Dean MBA Programmes and Professor of Marketing

NUS Business School,

National University of Singapore

References

Beke, F. T., Eggers, F., & Verhoef, P. C. (2018). Consumer informational privacy: Current knowledge and research directions. Foundations and Trends® in Marketing, 11(1), 1-71.

Van Giffen, B., Herhausen, D., & Fahse, T. (2022). Overcoming the pitfalls and perils of algorithms: A classification of machine learning biases and mitigation methods. Journal of Business Research, 144, 93-106.

Wirtz, J., Kunz, W. H., Hartley, N., & Tarbit, J. (2023). Corporate digital responsibility in service firms and their ecosystems. Journal of Service Research, 26(2), 173-190.