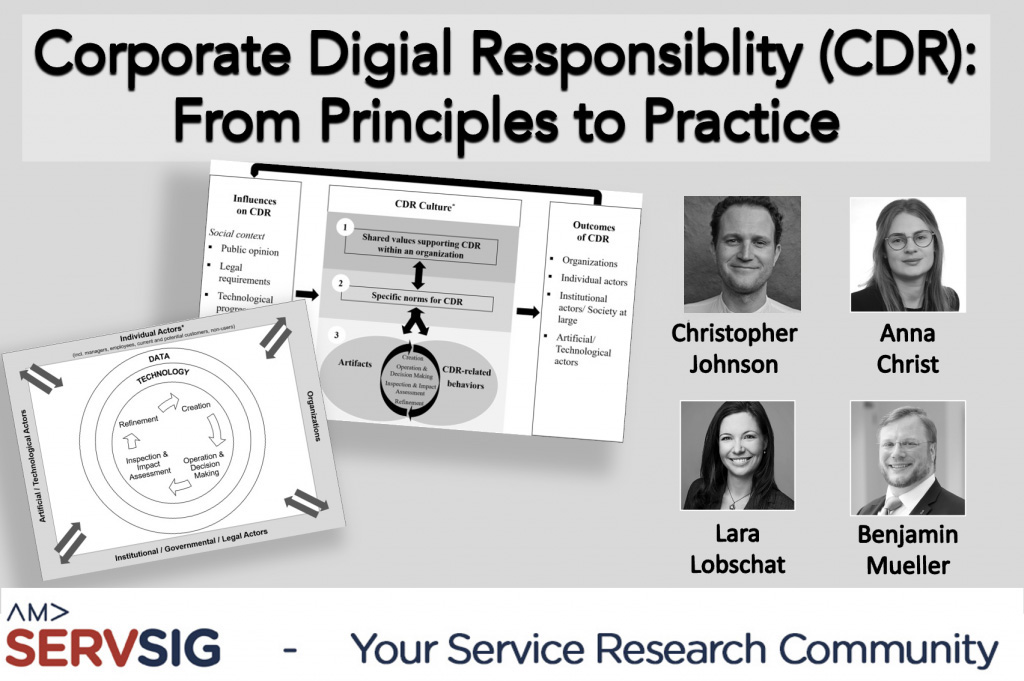

Guest article by Christopher Johnson, Anna Christ, Lara Lobschat, and Benjamin Mueller.

The emergence of CDR

As ever more aspects of human experience are subject to digitalization, we witness transformations that tend to generate both positive and negative outcomes. In part, this is because digital transformations by their very nature create new settings for which “either no policies for conduct […] exist or existing policies seem inadequate” (Moor 1985, p. 266). Consequently, corporations—as foremost actors of digital innovation—often lack any guidance on how the promises of digital technologies and data are to be weighed against their challenges and risks. This situation is compounded as the network of stakeholders working together in digital ecosystems grows in complexity (Stahl 2022). This creates a situation in which the question of who is responsible for the sustainable design and deployment of digital technology and data is often difficult to answer (Broadbent et al. 2015; Lüthi et al. 2023).

In particular, organizations that employ smart technologies and use customer data to optimize their products or services open themselves up to potential ethical risks and crises with serious reputational and financial consequences (Macnish et al. 2019; Wirtz et al. 2023). A recent case involved iTutorGroup and its integration of AI-supported recruitment software to grow its remote tutoring services. In August 2023, the company settled a EUR 339,000 (USD 365,000) legal dispute with the US Equal Employment Opportunity Commission after the software was found to automatically reject female applicants aged 55+ and male candidates over 60.

In response to the challenges arising in the context of the development and deployment of digital technologies and data, many organizations have begun to adopt Corporate Digital Responsibility (CDR) strategies to help steer them through this thorny ethical terrain. This is often accomplished by aligning company values with the normative concerns of the wider public by establishing a ‘set of shared values and norms guiding an organization’s operations with respect to the creation and operation of digital technology and data’ (Lobschat et al. 2021, p. 876). The basic implementation of CDR is the same as that of corporate social responsibility (CSR), and CDR may be viewed as one part of an overall CSR model. It is a voluntarily established set of policies and self-governing principles, developed, implemented, and overseen by corporations themselves, going beyond what is mandated by regulations (van der Merwe & Al Achkar 2022, p. 4).

In this blog post, we engage with what we term “the principles to practice gap,” that is, the many challenges well-meaning companies face when attempting to turn their digital responsibility aspirations into concrete actions and behaviors every day. In discussing three perspectives of CDR we established in earlier work (Lobschat et al. 2021)—input, process, output—we identify key inhibitors of CDR that deserve future managerial and scholarly attention. We do so by drawing on anonymized insights from ongoing empirical work by the author team to demonstrate how CDR emerges and some of its potential effects. The following briefly synthesizes the key challenges in an effort to spotlight them for future research in our community.

Input

In this perspective, a key concern for organizations is the question of where the foundational norms and values that are supposed to direct a corporation’s behavior in the digital age come from. Our interview partners named a range of motivations for implementing CDR, from legislative requirements and C-level personal involvement to a perceived individual moral duty.

Previous research posits that contemporary ethical norms for assigning responsibility will most likely be insufficient to effectively deal with future digital issues of moral concern (e.g., Mieth 2003; Moor 2006). Therefore, some anticipation may be required because not all norms and structures that are going to matter tomorrow can already be known today (Mueller et al. 2021; Parmiggiani et al. 2020). Taking this into account, CDR can be a useful tool to prepare for ethical dilemmas arising from the (un)intended outcomes of technology and data use, as well as for operating in a social environment in which it is nearly impossible to predict the trajectory of consumer values and technological developments. Good CDR approaches recognize that today’s salient issues (e.g., data privacy, cybersecurity) will at some point be replaced by new and unforeseen social and economic challenges depending on input factors such as public opinion, technological progress, changes to the law, or shifting consumer behavior. As such, investing in CDR can help organizations build digital resilience, sensitizing the firm to the fact that it cannot merely rely on the continuation of current socio-ethical-technical trajectories when sketching out its own path, as these are liable to change over time. One particular challenge in this is that traditional perspectives of corporate responsibility tend to mostly account for stakeholders that can easily be represented in outreach activities or advisory bodies. But what about non-users of technology, or those against whom AI-based decision support systems are biased even though they are not using these systems directly (Lobschat et al. 2021; Mueller 2022)?

It is important to note that CDR is not about generating spot-on predictions of future ethical problems, but rather about positioning them in their wider social context, thereby expanding the range of decision-making capabilities still subject to our present-day agency. Providing input for a CDR regime is often tricky because corporations need to reach into futures that cannot be known yet (Hovorka & Mueller 2024). In our ongoing data collection, we saw how one organization envisaged the increasing stress on the healthcare sector due to population growth. This has prompted them to take action in the present through measures to increase digital literacy, thereby bolstering their customers’ ability to use next-generation digital health services and alleviating future stress on the healthcare system.

Spotlight: Building a digitally resilient society

According to the digital coordinator of a central European health insurance company, digital solutions are one way to meet the challenge of providing healthcare to an increasing number of people resulting from demographic change. In our recent interview study, she stated that in the future “we need people who are able to use our digital services,” offering this as an instrumental justification for the company’s investment in a digital competence and learning platform for children “who are [our] future customers”.

The input perspective is crucial for corporations because it provides the foundation for good corporate citizenship in the future. Corporations that are not “digitally native” in particular need to sensitize themselves, their employees, and the wider community to the impact of digitalization on their business and operating models. Initiatives that seem as simple as “taking our business online” often come with far-reaching implications, and careful reflection on these implications is crucial if a larger CDR initiative is to be built on solid ground.

Process

As we have seen, the major challenge is keeping the company’s values aligned with shifts in public opinion and consumer norms and values. How can a CDR framework help in this regard? The process perspective focuses on how well-meaning norms and values translate into desirable behaviors on a day-to-day basis.

Key here is a careful consideration of processes in relation to roles, rules, and responsibilities (governance). Our ongoing research shows a great diversity in CDR processes, with both centralized approaches (e.g., the employment of a digital responsibility officer) and decentralized approaches (e.g., providing learning materials related to digital responsibility for all employees). Furthermore, first insights show a trend towards either a strong formalization of CDR (such as the use of the terminology within the organization) or a more diffuse approach that attempts to ingrain the norms and values into the very fabric of the firm on a more cultural level. Lastly, our data show that some groups choose to concentrate on CDR-related tools, with one firm employing a tool that automatically calculates ethical risks based on existing project characteristics and suggests possible measures to eliminate them. Another company sees CDR as conversation-based, both internally and externally.

Spotlight: Employing a CDR codex to encourage CDR compliant behavior

As a developer of algorithmic decision-making software, a German software engineering company prioritizes digital responsibility by integrating it into its business model, as articulated in a formal codex it has developed. One of the company’s founders states that the company’s principles of “transparency,” “sensitivity to bias,” and “self-determination” are built into every phase of the value chain, spanning from the strategic to the operational levels.

The process perspective can build on governance research from the corporate social responsibility domain (e.g., Herden et al. 2021). However, a significant stream of CDR research posits that CSR principles cannot simply be transferred to the challenges posed by digitalization (e.g., Mihale-Wilson et al. 2022; Mueller 2022). One of the key challenges corporations face here is the question of whether their existing CSR regime is capable of helping them translate CDR principles into action. While extant research identifies a couple of interesting approaches (e.g., Wynn & Jones 2023), current experiences in the field suggest a certain level of skepticism on this point. Both academic research and managerial practice have some catching-up to do.

Output

Even if companies have the inputs and processes all figured out, our experiences suggest that the output perspective constitutes yet another hurdle in bringing CDR to life. This is especially true because the long-term impacts and KPIs related to CDR remain unknown so far, although our initial insights do shed some light on the topic. This lack of quantitative insights on the impacts of CDR on important KPIs makes the justification of investments vis-à-vis shareholders considerably more difficult. One interviewee spoke about CDR contributing to a company culture that “people would want to work for,” highlighting the potential CDR has for talent acquisition and retention.

Spotlight: CDR policies of a pharma company helped decrease employee uncertainty

In our discussions with an international pharma company, the company’s compliance officer for global digital processes elaborated on successful outcomes of investment in CDR policies: “Employees are thanking us for coming up with guidance on topics that were nebulous, or not clear, for example on the use of generative AI at work.” The company has codified a CDR framework intended to ensure that all employees implement the ethical values and principles of the company in their digital activities.

The spotlight shows that corporations are beginning to build an understanding of why CDR matters and how they can evaluate their efforts. Nonetheless, no universal approach to measuring output is available. The spotlight shows a more qualitative approach that is focused on internal stakeholders. Other companies we work with highlight the need to protect against reputational risks usually associated with data leaks or breaches of confidentiality. Others emphasize regulatory compliance as a key outcome of their CDR initiatives, especially in the context of GDPR. What becomes clear is a two-fold challenge: (1) how to measure and manage the outcome of CDR initiatives, especially in relation to other KPIs (e.g., how much revenue are we willing to sacrifice for more compliant behavior?), and (2) what dimensions need to be incorporated in a corresponding measurement and management framework, also in light of the complex ecosystem of stakeholders we discussed earlier (e.g., Wade 2020).

In this context, a key question will be whether CDR is understood as just a tool to mitigate risks or whether there are ways to think of it as an emergent opportunity for meaningful differentiation. Many of our conversations indicate that future talent and financiers in particular are keen to understand a corporation’s approach to corporate responsibility in a digital age when they make their employment or investment decisions. In addition, developments such as consumer and brand activism or an ever-broader adoption of sustainability certifications highlight the opportunity to set a corporation apart from the competition.

Outlook

Our ongoing work reveals a number of open issues that early movers on CDR are currently tackling. Common across all of these is a strong motivation to behave responsibly in a digital age beyond just compliance with policies. In this, the principles to practice gap we postulated at the beginning manifests across our three perspectives: Where do the inputs for a corporation’s CDR regime come from? How are norms and values, once determined, translated into processes that safeguard responsible behaviors? How do we measure and manage the output of these behaviors to check whether we are on track?

What our interactions with CDR professionals reveal is a strong need for research in all three perspectives we discuss. This need becomes especially important as corporations feel the dearly held certainty of a purely financial bottom-line fade away. As we are facing more far-reaching economic transformations than just digital ones, decision-makers are likely to be confronted with more tricky trade-offs among the dimensions of the triple bottom-line. Strong norms and values will be essential to provide guidance in this context, and many of the decisions needed are likely to be connected to issues of digitalization (Zimmer et al., 2023).

As the CDR community sets out to face some of the challenges we set out above, we propose three key themes for future research. First, renewed attention to the conceptual development that is needed to define and understand what CDR is. This will be essential not only to set CDR apart from related concepts, but also to make sure we know what we are studying when engaging an enormously heterogeneous phenomenon in the field. Second, the building of a broad empirical base. While CDR seems quite popular, the number of corporations going “all in” is still limited. Those that do engage in CDR projects do so with different foci and at differing paces. Collecting and meaningfully synthesizing these different approaches is going to be difficult, but will be necessary to develop a sound empirical basis on which we can draw. Third, we need to carefully review our research apparatus. Seeing that much of the CDR debate is value-laden and often relates to issues that have not materialized yet (e.g., how do we design technology to avoid a dystopian future; Kane et al. 2021), an engagement with critical and foresight-based research methods is crucial. This entails not only a necessary upskilling of scholars in this regard, but also a critical discussion of how our institutions can appreciate and evaluate corresponding contributions.

While challenging, these aspects are crucial for meaningfully mobilizing future CDR research that can help develop impactful guidance for corporations as they move into more digital futures. Such guidance is essential if corporations want to successfully translate principles to practice.

Christopher Johnson, University of Bremen

Anna-Sophia Christ, University of Bremen

Benjamin Müller, University of Bremen

Lara Lobschat, Maastricht University

Further reading

— Broadbent, S., Dewandre, N., Ess, C. M., Floridi, L., Ganascia, J.-G., Hildebrandt, M., Laouris, Y., Lobet-Maris, C., Oates, S., Pagallo, U., Simon, J., Thorseth, M., & Verbeek, P.-P. (2015). The Onlife Manifesto. In L. Floridi (Ed.), The Onlife Manifesto – Being Human in a Hyperconnected Era (pp. 6-13). Springer.

— Herden, C. J., Alliu, E., Cakici, A., Cormier, T., Deguelle, C., Gambhir, S., Griffiths, C., Gupta, S., Kamani, S. R., Kiratli, Y.-S., Kispataki, M., Lange, G., Moles de Matos, L., Tripero Moreno, L., Betancourt Nunez, H. A., Pilla, V., Raj, B., Roe, J., Skoda, M., . . . Edinger-Schons, L. M. (2021). “Corporate Digital Responsibility”. Sustainability Management Forum, 29(1), 13-29.

— Hovorka, D. S., & Mueller, B. (2024, January 03-06). Speculation: Form and Function. 57th Hawaii International Conference on System Sciences, Honolulu, HI, USA.

— Kane, G. C., Young, A. G., Majchrzak, A., & Ransbotham, S. (2021). Avoiding an Oppressive Future of Machine Learning: A Design Theory for Emancipatory Assistants. MIS Quarterly, 45(1), 371-396.

— Lobschat, L., Mueller, B., Eggers, F., Brandimarte, L., Diefenbach, S., Kroschke, M., & Wirtz, J. (2021). Corporate Digital Responsibility. Journal of Business Research, 122, 875-888.

— Lüthi, N., Matt, C., Myrach, T., & Junglas, I. (2023). Augmented Intelligence, Augmented Responsibility? Business & Information Systems Engineering, 65(4), 391-401.

— Macnish, K., Ryan, M., & Stahl, B. (2019). Understanding Ethics and Human Rights in Smart Information Systems: A Multi Case Study Approach. The ORBIT Journal, 2(2), 1-34.

— Mieth, D. (2003). Ethik der Informatik. In K. R. Dittrich, W. König, A. Oberweis, K. Rannenberg, & W. Wahlster (Eds.), Beiträge der 33. Jahrestagung der Gesellschaft für Informatik (INFORMATIK 2003) – Band 2 (pp. 166-175).

— Mihale-Wilson, C., Hinz, O., van der Aalst, W., & Weinhardt, C. (2022). Corporate Digital Responsibility. Business & Information Systems Engineering, 64(2), 127-132.

— Moor, J. H. (1985). What Is Computer Ethics? Metaphilosophy, 16(4), 266-275.

— Moor, J. H. (2006). The Nature, Importance, and Difficulty of Machine Ethics. IEEE Intelligent Systems, 21(4), 18-21.

— Mueller, B. (2022). Corporate Digital Responsibility. Business & Information Systems Engineering, 64(5), 689-700.

— Mueller, B., Diefenbach, S., Dobusch, L., & Baer, K. (2021). From Becoming to Being Digital: The Emergence and Nature of the Post-Digital. i-com, 20(3), 319-328.

— Parmiggiani, E., Teracino, E. A., Huysman, M., Jones, M., Mueller, B., & Mikalsen, M. (2020). OASIS 2019 Panel Report: A Glimpse at the ‘Post-Digital’. Communications of the Association for Information Systems, 47, 583-596.

— van der Merwe, J., & Al Achkar, Z. (2022). Data responsibility, corporate social responsibility, and corporate digital responsibility. Data & Policy, 4(12).

— Wade, M. (2020, April 28). Corporate Responsibility in the Digital Era. Retrieved May 12 from https://sloanreview.mit.edu/article/corporate-responsibility-in-the-digital-era/

— Wirtz, J., Kunz, W. H., Hartley, N., & Tarbit, J. (2023). Corporate Digital Responsibility in Service Firms and Their Ecosystems. Journal of Service Research, 26(2), 173-190.

— Wynn, M. G., & Jones, P. (2023). Corporate responsibility in the digital era. Information, 14(324), 1-12.

— Zimmer, M. P., Järveläinen, J., Stahl, B. C., & Mueller, B. (2023). Responsibility of/in digital transformation. Journal of Responsible Technology, available online ahead of print.